From Regulation to Implementation: Translating the EU AI Act into Product Requirements for Medical Devices

The EU AI Act introduces a unified regulatory framework that directly affects how AI-driven medical devices must be designed, validated, and monitored. For many Healthcare and Life Sciences organizations, the AI Act represents both a compliance challenge and an opportunity to improve how AI-enabled medical products are planned and built. Its principles offer a foundation for developing safer, more reliable, and more user-centered AI solutions.

This article examines the practical implications of the AI Act for product development. It explains what the new rules mean for AI-based medical devices, outlines exemplary user stories that translate regulatory clauses into actionable requirements for engineers, and shows how companies can align these requirements with design decisions and supporting evidence.

Introduction

Artificial Intelligence (AI) systems are becoming an integral part of medical technology, from diagnostic imaging tools to clinical decision support software. With the adoption of the EU Artificial Intelligence Act (AI Act), high-risk AI systems, including AI-based medical devices that require third-party conformity assessment (AI Act, Article 6 (1)), must comply with a new layer of obligations covering human oversight, transparency, robustness, and accuracy. These are not just abstract principles; they must be reflected in product design and documented within the manufacturer’s technical documentation.

To make these regulatory requirements actionable and consistent with ISO 13485 and IEC 62304, the following sections reframe them as exemplary user stories and product requirements. These should be developed within established software planning and requirements engineering processes, not in isolation. They must also be aligned with defined user groups, since responsibilities and needs vary across user categories.

Human Oversight & Control

AI Act requirement: AI systems must be designed to allow effective human oversight and intervention (AI Act, Article 14).

User Story:

As a manufacturer of high-risk healthcare AI systems, I want to design my AI system with features that support effective human oversight, enabling trained personnel to monitor and intervene when necessary.

Product Requirements:

- The system shall provide an override function allowing users to disregard or reverse AI outputs.

- The system shall include an emergency STOP function to immediately halt operation if safety is compromised.

- The system shall display confidence thresholds and alert users when predictions fall below acceptable accuracy levels.

- The human‑machine interface shall present outputs as recommendations, not facts, and require explicit acknowledgement in predefined high‑risk scenarios to mitigate automation bias (AI Act, Article 14).

- The system shall provide contextual guidance enabling deployers to interpret outputs and apply appropriate human oversight measures (AI Act, Article 13 (3) (d) & Article 14).

Documentation Impact:

These requirements should be reflected in the manufacturer’s approved design and requirements documents (e.g., a requirements specification or design dossier), and supported with relevant risk analyses and usability validation activities.
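
As an illustration, three of the requirements above (low-confidence alerts, recommendation-style wording with explicit acknowledgement, and the override function) could be sketched as follows. This is a minimal sketch, not a reference implementation: the `Recommendation` class, the threshold value, and the list of high-risk findings are hypothetical and would in practice be derived from the manufacturer's risk analysis and validation evidence.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical values for illustration only; real thresholds and scenario
# lists would come from the manufacturer's risk analysis and validation data.
CONFIDENCE_ALERT_THRESHOLD = 0.80
HIGH_RISK_FINDINGS = {"suspected malignancy"}


@dataclass
class Recommendation:
    finding: str
    confidence: float
    acknowledged_by: Optional[str] = None
    overridden_by: Optional[str] = None
    override_reason: Optional[str] = None

    @property
    def low_confidence(self) -> bool:
        return self.confidence < CONFIDENCE_ALERT_THRESHOLD

    @property
    def needs_acknowledgement(self) -> bool:
        # Explicit acknowledgement is required in predefined high-risk
        # scenarios and whenever confidence is below the alert threshold.
        return self.low_confidence or self.finding in HIGH_RISK_FINDINGS

    def display_text(self) -> str:
        # Outputs are worded as recommendations, not as facts.
        text = (f"AI recommendation (not a diagnosis): {self.finding}, "
                f"confidence {self.confidence:.0%}")
        if self.low_confidence:
            text += " [LOW CONFIDENCE: review carefully]"
        return text

    def acknowledge(self, user_id: str) -> None:
        self.acknowledged_by = user_id

    def override(self, user_id: str, reason: str) -> None:
        # The user may always disregard the AI output, but must say why.
        if not reason.strip():
            raise ValueError("An override requires a documented reason.")
        self.overridden_by = user_id
        self.override_reason = reason

    def can_be_finalized(self) -> bool:
        if self.overridden_by is not None:
            return True
        return not self.needs_acknowledgement or self.acknowledged_by is not None
```

In this sketch a low-confidence or high-risk recommendation cannot be finalized until a user acknowledges or overrides it, which is one possible way to make the acknowledgement step verifiable in usability validation.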

Transparency & Documentation

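AI Act requirement: High-risk AI systems must be sufficiently transparent for deployers to interpret their output and use it appropriately, and must be accompanied by clear instructions for use (AI Act, Article 13).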

User Story:

As a manufacturer of healthcare AI systems, I want to maintain transparent documentation of my AI system’s capabilities, limitations, and intended use so users understand its operation and boundaries.

Product Requirements:

- The system shall provide explainability outputs (e.g., heatmaps in radiology, reasoning traces in natural language processing).

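- The system shall notify users at the start of interaction that they are engaging with an AI system, where it interacts directly with natural persons (AI Act, Article 50 (1)).

- The system shall generate machine-readable output labels marking content as AI-generated, where it produces synthetic content (AI Act, Article 50 (2)).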

- The system shall present the overall expected level of accuracy for its intended purpose, including, where appropriate, accuracy levels for specific persons or groups on which the system is intended to be used (AI Act, Article 13; Annex IV §3).

- The system shall enforce input data specifications for the intended purpose (e.g., modality, acquisition parameters, age range) and block out‑of‑scope inputs with an explanatory message (AI Act, Article 13 (3) (b) (vi)).

- The system shall surface limitations and foreseeable circumstances that may lead to risks or degraded performance at the point of use (AI Act, Article 13 (3) (b) (iii)).

- The system shall display model/version information and pre‑determined changes when such changes affect performance (AI Act, Article 13 (3) (c); Annex IV §2 (f)).

Documentation Impact:

These requirements should be captured in the manufacturer’s approved design and requirements documentation (for example, requirements specifications or a design dossier, depending on the company's terminology) and supported by clinical evaluation evidence demonstrating safe and effective use. In line with MDR, information supplied with the device (e.g., IFU) is considered part of the medical device. However, functional warnings, accuracy displays, and in‑use messages must be implemented directly within the system itself. The IFU serves to document and explain these mechanisms, not to replace them.
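
To make two of the transparency requirements above more concrete, the following minimal sketch shows an input specification check that blocks out-of-scope inputs with an explanatory message, and a machine-readable label attached to each output. The `InputSpec` fields, the example specification, the model version string, and the label keys are all assumptions chosen for illustration; they are not prescribed by the AI Act.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class InputSpec:
    modalities: frozenset
    min_age: int
    max_age: int


# Hypothetical intended-purpose specification and version identifier.
SPEC = InputSpec(modalities=frozenset({"CT"}), min_age=18, max_age=90)
MODEL_VERSION = "example-model 1.2.0"


def check_input(modality: str, patient_age: int, spec: InputSpec = SPEC) -> list:
    """Return explanatory messages; an empty list means the input is in scope."""
    problems = []
    if modality not in spec.modalities:
        supported = ", ".join(sorted(spec.modalities))
        problems.append(
            f"Modality '{modality}' is outside the intended purpose "
            f"(supported: {supported})."
        )
    if not spec.min_age <= patient_age <= spec.max_age:
        problems.append(
            f"Patient age {patient_age} is outside the validated range "
            f"{spec.min_age}-{spec.max_age} years."
        )
    return problems


def label_output(finding: str, stated_accuracy: float) -> str:
    """Wrap an output in a machine-readable, AI-generated label (JSON)."""
    return json.dumps(
        {
            "ai_generated": True,
            "model_version": MODEL_VERSION,
            "finding": finding,
            "stated_accuracy": stated_accuracy,
        },
        sort_keys=True,
    )
```

A caller would run `check_input` before inference and refuse to process the case if the returned list is non-empty, showing the messages to the user instead of a result.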

Data Governance & Quality

AI Act requirement: Training, validation, and testing datasets must be relevant, sufficiently representative, and, to the best extent possible, free of errors and complete, and must be examined for possible biases (AI Act, Article 10).

User Story:

As a manufacturer of high-risk healthcare AI systems, I want to ensure that my training, validation, and testing datasets are relevant, representative, and as free of errors as possible, so the AI system operates accurately and reliably.

Product Requirements:

- All datasets must be documented in a Data Governance File, including provenance, cleaning, and quality checks.

- The system shall include input validation routines to prevent the use of corrupted or incomplete datasets.

- Validation studies must cover diverse populations to minimize bias across demographic groups (gender, age, ethnicity).

Documentation Impact:

Requirements should be included in the manufacturer’s approved design and requirements documentation, with particular emphasis on a structured Data Governance File and comprehensive validation evidence. Clinical evaluation and performance testing reports should demonstrate representativeness and bias mitigation.
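
One simple way to generate evidence for the bias-related requirement above is to report performance per demographic subgroup and flag groups that lag behind. The sketch below assumes validation results are available as a list of dictionaries with hypothetical `prediction` and `label` keys plus a demographic attribute; the 5-percentage-point gap is an arbitrary illustrative limit, not a regulatory value.

```python
from collections import defaultdict


def subgroup_accuracy(records, group_key):
    """Compute accuracy separately for each value of a demographic attribute."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for record in records:
        group = record[group_key]
        totals[group] += 1
        correct[group] += int(record["prediction"] == record["label"])
    return {group: correct[group] / totals[group] for group in totals}


def flag_gaps(accuracy_by_group, max_gap=0.05):
    """Return groups whose accuracy trails the best group by more than max_gap."""
    best = max(accuracy_by_group.values())
    return sorted(
        group
        for group, accuracy in accuracy_by_group.items()
        if best - accuracy > max_gap
    )
```

Accuracy is used here only for brevity; a real validation study would typically report clinically relevant metrics such as sensitivity and specificity with confidence intervals, and would also check that each subgroup is large enough to support a conclusion.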

Robustness & Resilience

AI Act requirement: AI systems must be resilient, reliable, and capable of handling errors or manipulation attempts (AI Act, Article 15).

User Story:

As a manufacturer of high-risk healthcare AI systems, I want to incorporate redundancy and error-handling mechanisms to ensure the system remains reliable despite errors or manipulation attempts.

Product Requirements:

- The system shall perform automated self-checks at startup and during operation.

- The system shall detect model drift and alert operators when retraining is needed.

- The system shall default to a safe state if critical errors occur.

- The system shall detect and reject anomalous or out‑of‑distribution inputs (e.g., corrupted images, unsupported formats) and record such events (AI Act, Article 15).

- The system shall include protections against data poisoning and adversarial manipulation of inputs and models, including integrity verification of models and critical artefacts (AI Act, Article 15 – cybersecurity).

- The system shall enforce minimum performance thresholds at runtime; if performance drops below thresholds, it shall degrade gracefully or switch to an alternative safe workflow and notify the user (AI Act, Article 15).

Documentation Impact:

Robustness metrics, accuracy measures, and fallback behaviors should be included in the manufacturer’s approved design and requirements documentation. Verification and validation evidence must demonstrate predictable behavior in fault conditions, with defined accuracy levels and confidence thresholds. In addition, both company-level and system-level cybersecurity controls should be addressed as part of the supporting processes.
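
Two of the robustness requirements above, integrity verification of model artefacts and a runtime check that triggers a safe state, could be sketched as follows. The `DriftMonitor` class, its parameters, and the `"SAFE_STATE"` return value are hypothetical. Note also that monitoring the rolling mean of confidence scores is only a crude proxy for model drift, used here because it needs no ground-truth labels; a production system would likely combine several signals.

```python
import hashlib
from collections import deque
from statistics import mean


def verify_artifact(data: bytes, expected_sha256: str) -> bool:
    """Self-check: compare a model artefact against its released SHA-256 digest."""
    return hashlib.sha256(data).hexdigest() == expected_sha256


class DriftMonitor:
    """Flags a safe state when mean confidence leaves the validated band."""

    def __init__(self, baseline_mean: float, tolerance: float, window: int = 50):
        self.baseline_mean = baseline_mean
        self.tolerance = tolerance
        self.scores = deque(maxlen=window)

    def observe(self, confidence: float) -> str:
        self.scores.append(confidence)
        if len(self.scores) < self.scores.maxlen:
            return "OK"  # not enough observations to judge yet
        if abs(mean(self.scores) - self.baseline_mean) > self.tolerance:
            return "SAFE_STATE"  # caller should stop automated output and alert
        return "OK"
```

A startup routine could call `verify_artifact` on the model file and refuse to load it on a mismatch, while the inference loop feeds each confidence score to `observe` and switches to the fallback workflow when `"SAFE_STATE"` is returned.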

Logging & Record-Keeping

AI Act requirement: High-risk AI systems must technically allow the automatic recording of events (logs) over their lifetime to support traceability, compliance, and audits (AI Act, Article 12).

User Story:

As a manufacturer of high-risk healthcare AI systems, I want to implement detailed event logging so compliance evidence is available throughout the system lifecycle.

Product Requirements:

- The system shall automatically record events (logs) over its lifetime, with a level of traceability appropriate to the intended purpose (AI Act, Article 12 (1)-(2)).

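- Logs shall include at minimum: timestamped start/end of each use, input/query metadata, data source accessed, user identity or role, model/version identifier and configuration, and the AI output/status (based on the traceability purposes in AI Act, Article 12 (2), and the minimum log content that Article 12 (3) makes mandatory for remote biometric identification systems).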

- The system shall provide mechanisms for deployers to collect, store, and interpret logs (AI Act, Article 13 (3) (f)).

- Logs must be tamper‑evident and exportable for audit, and must integrate with the Post‑Market Surveillance (PMS) system.

Documentation Impact:

Logging requirements should be captured in the manufacturer’s approved design and requirements documentation (depending on company terminology). PMS plans and reports must demonstrate ongoing monitoring of log data.
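
The tamper-evidence requirement above can be approached with a hash chain, in which every log entry stores the hash of its predecessor so that any later modification breaks verification. The following is a minimal in-memory sketch under that assumption; the function names and the event fields are hypothetical, and a real system would also persist the log and anchor the latest hash outside the device so that wholesale replacement of the log can be detected.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder hash preceding the first entry


def _digest(entry: dict) -> str:
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_event(log: list, event: dict) -> dict:
    """Append an event, chaining it to the hash of the previous entry."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event": event,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry["hash"] = _digest(entry)  # digest is computed before "hash" is added
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; False means an entry was altered or reordered."""
    prev = GENESIS
    for entry in log:
        body = {key: value for key, value in entry.items() if key != "hash"}
        if entry["prev_hash"] != prev or _digest(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True
```

An audit export could then ship the log together with the result of `verify_chain`, giving reviewers a quick integrity check before they interpret individual events.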

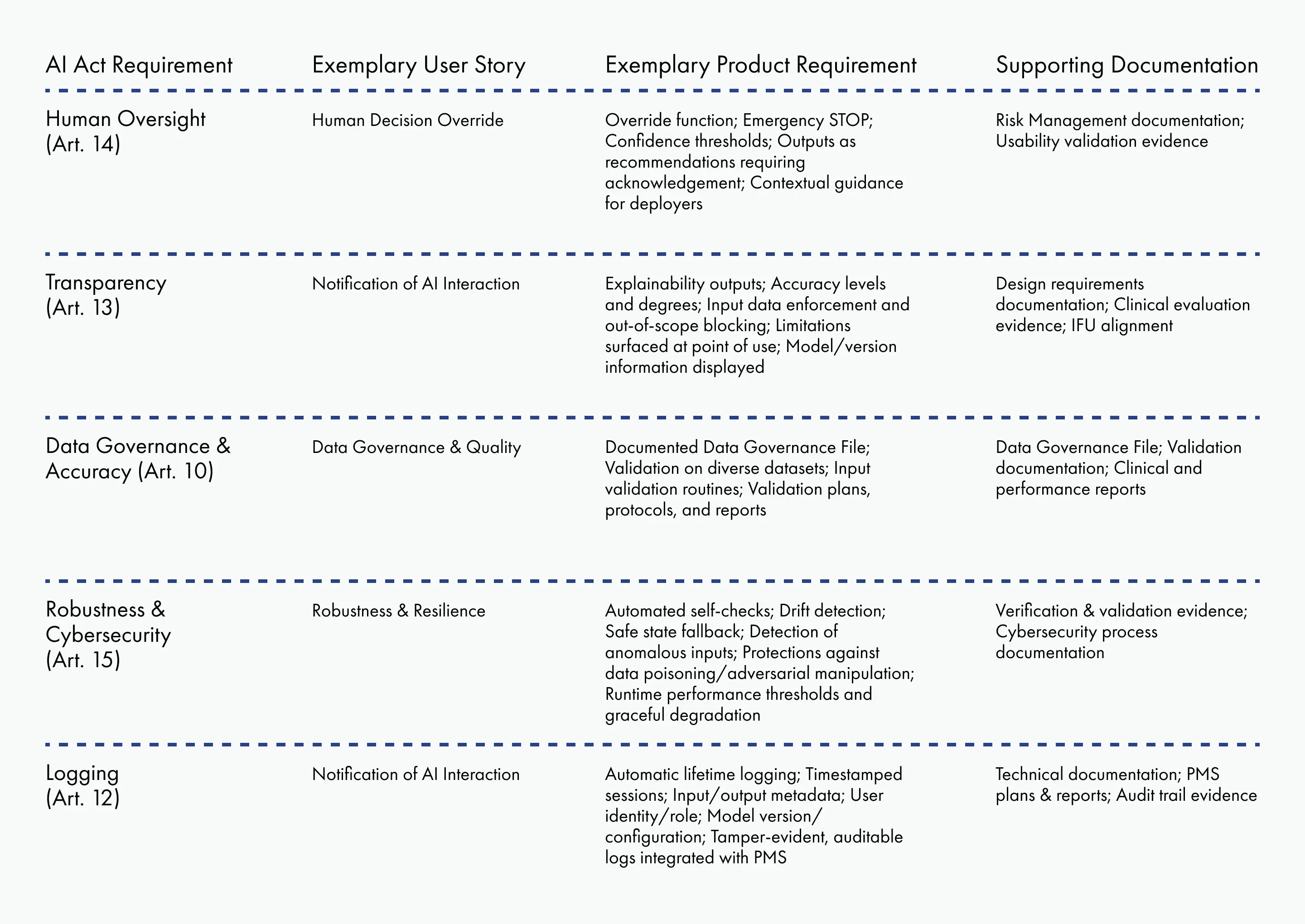

AI Compliance Matrix

To streamline traceability and simplify audits, manufacturers can establish an AI Compliance Matrix that maps AI Act requirements to design documentation.

This matrix, or a similar one, provides auditors and notified bodies with a clear cross-reference between regulations, user needs, product requirements, and technical documentation. It can also be expanded to include detailed references, document identifiers, version numbers, or other metadata to strengthen traceability.
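
If the matrix is maintained as structured data and not as a static table, gaps in traceability can be checked automatically. The sketch below assumes a simple four-column layout; the column names, requirement identifiers, and document titles are hypothetical placeholders and not taken from any specific quality management system.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class MatrixRow:
    article: str
    user_need: str
    requirement_id: str
    evidence_doc: str  # empty string means no evidence is linked yet


# Hypothetical example rows.
ROWS = [
    MatrixRow("Article 14", "Human oversight", "REQ-001", "Usability validation report"),
    MatrixRow("Article 12", "Record-keeping", "REQ-002", ""),
]


def missing_evidence(rows):
    """List requirement IDs that have no linked evidence document."""
    return [row.requirement_id for row in rows if not row.evidence_doc.strip()]


def to_csv(rows) -> str:
    """Export the matrix as CSV, e.g. for handover to an auditor."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(MatrixRow)])
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))
    return buffer.getvalue()
```

Running `missing_evidence` as part of a release checklist would surface requirements that are specified but not yet backed by verification or validation evidence.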

Conclusion

The AI Act moves beyond broad principles by requiring high-risk AI systems, including AI-based medical devices, to demonstrate compliance with defined obligations. When these obligations are reframed as exemplary user stories and concrete product requirements, they fit naturally into established design and documentation processes. This approach turns abstract regulatory language into actionable guidance for software engineers, ensures consistency with MDR and ISO 13485, and provides manufacturers with a clear path to demonstrating conformity with the AI Act.